Doi:

Abstract:

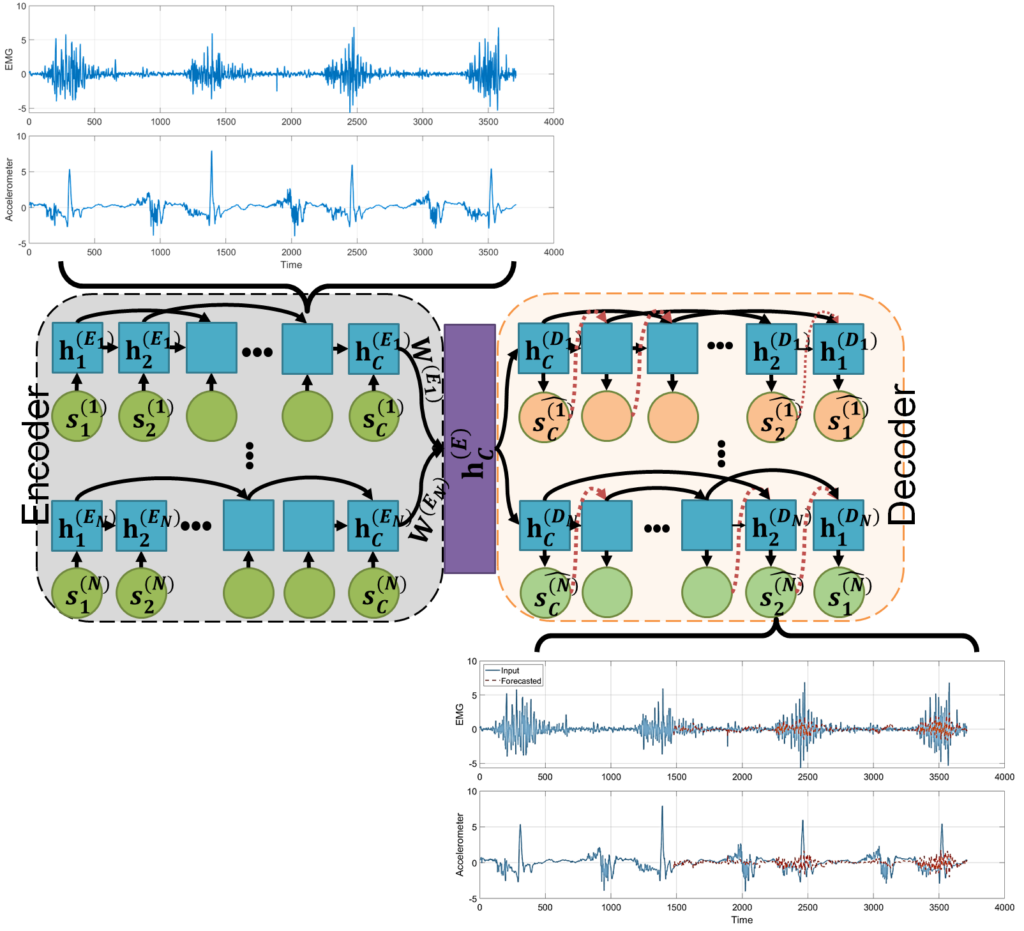

Body representations emerge through the integration of multisensory cues and support interaction with the internal and external world. In this study, we empirically investigate how integrating auditory cues with other multisensory and sensorimotor cues influences perceived body size and weight. Participants (N=104) wore motion capture suits and wearable sensors while walking six times along a 10-meter-long trail and listening to their footstep sounds through headphones. Across trials, we applied low-pass and high-pass filters to the footstep sounds to create the illusion of a heavier or lighter body; a control condition with unfiltered sound was also included. Participants rated their perceived weight on a scale of 1-7, completed an avatar task to assess their sense of embodiment with respect to weight changes, and marked body maps to indicate changes in body perception. We employ Machine Learning (ML) methods to construct a discriminative latent space that associates sensor data with self-reports. We will present findings on the influence of modulated footstep sounds on subjective experience, specifically participants’ perception of their weight, their evaluation of their avatar’s body size, and the altered sensations reported in various regions of their body maps. Our findings highlight the potential of ML methods to create a dynamic and individualised blueprint of people’s body representations, opening possibilities for a real-time measure of changes in body representations. This research supports the development of new health strategies for people with body representation disorders and related concerns.
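To illustrate the kind of sound modulation described above, the following is a minimal sketch of low-pass and high-pass filtering applied to a sampled signal. It is not the study's processing pipeline: the abstract does not specify the filter design, cutoff frequencies, or sample rate, so the first-order filters, the 1000 Hz cutoff, the 44.1 kHz rate, and all function names here are assumptions chosen only for illustration.

```python
import math


def one_pole_lowpass(samples, cutoff_hz, sample_rate_hz):
    """First-order (RC-style) low-pass filter: attenuates content above cutoff_hz."""
    dt = 1.0 / sample_rate_hz
    rc = 1.0 / (2.0 * math.pi * cutoff_hz)
    alpha = dt / (rc + dt)  # smoothing factor in (0, 1)
    out = []
    prev = 0.0  # filter state, starts at rest
    for x in samples:
        prev = prev + alpha * (x - prev)
        out.append(prev)
    return out


def one_pole_highpass(samples, cutoff_hz, sample_rate_hz):
    """First-order high-pass filter, built as the input minus its low-passed version."""
    low = one_pole_lowpass(samples, cutoff_hz, sample_rate_hz)
    return [x - l for x, l in zip(samples, low)]


def tone(freq_hz, duration_s, sample_rate_hz):
    """Pure sine tone, used here as a stand-in for recorded footstep audio."""
    n = int(duration_s * sample_rate_hz)
    return [math.sin(2.0 * math.pi * freq_hz * i / sample_rate_hz) for i in range(n)]


def rms(samples):
    """Root-mean-square level of a signal."""
    return math.sqrt(sum(x * x for x in samples) / len(samples))


if __name__ == "__main__":
    SAMPLE_RATE = 44100   # assumed sample rate (Hz)
    CUTOFF = 1000.0       # hypothetical cutoff (Hz), not taken from the study

    low_tone = tone(100.0, 0.5, SAMPLE_RATE)    # low-frequency component
    high_tone = tone(4000.0, 0.5, SAMPLE_RATE)  # high-frequency component

    # The low-pass keeps the 100 Hz tone and attenuates the 4000 Hz tone;
    # the high-pass does the opposite.
    print("low-pass :", rms(one_pole_lowpass(low_tone, CUTOFF, SAMPLE_RATE)),
          rms(one_pole_lowpass(high_tone, CUTOFF, SAMPLE_RATE)))
    print("high-pass:", rms(one_pole_highpass(low_tone, CUTOFF, SAMPLE_RATE)),
          rms(one_pole_highpass(high_tone, CUTOFF, SAMPLE_RATE)))
```

In practice, a steeper filter (for example a higher-order Butterworth design from a signal-processing library) would likely be preferred for audio presented to participants; the first-order form is used here only because it keeps the sketch dependency-free.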